You’ve likely experienced it: a once-snappy application slowly becomes sluggish, a seemingly robust operating system starts crashing unpredictably, or a website you frequent develops inexplicable glitches. We often attribute these issues to “bugs” or simply “getting old.” But unlike physical objects that visibly corrode or wear down, software doesn’t physically degrade. It exists as abstract logic, a series of ones and zeros. Yet, the symptoms are strikingly similar to physical decay. What invisible force causes this digital degradation?

The answer lies in a phenomenon usually called “software entropy” or “software rot,” and which this article will call, more evocatively, “digital dust.” Imagine a meticulously organized library. Over time, new books are added, some are moved, others are annotated, and sections are re-cataloged, sometimes by different librarians with varying systems. The books themselves remain intact, but the system for finding and using them becomes more complex and less efficient. This growing disorder, the accumulation of minor inconsistencies and inefficiencies, is the essence of digital dust.

This isn’t about code literally disintegrating; rather, it’s about the growing disorder and unintended complexity within a software system. Every modification, every new feature, every bug fix, however small, has the potential to introduce subtle inconsistencies. Over hundreds or thousands of these changes, the system gradually drifts from its initial clean design. The accumulated shortcuts and quick fixes are often termed “technical debt,” a metaphor for deferred maintenance: the longer it goes unpaid, the more every later change costs.

The sources of this digital dust are multifaceted. Internally, a primary contributor is the continuous development cycle itself. Software is rarely a finished product; it’s a living entity, constantly updated, patched, and expanded. Each modification, whether intended to add a new function or fix a vulnerability, can inadvertently create new dependencies, introduce redundant code, or make existing code harder to understand and modify. Furthermore, as developers rotate through projects, knowledge about specific design decisions or workarounds can be lost, leaving subsequent teams to navigate an increasingly opaque codebase.

External factors also play a significant role. The broader technology ecosystem is in constant flux. Operating systems receive major updates, hardware evolves with faster processors and different architectures, and third-party libraries or APIs that a piece of software relies upon are updated, deprecated, or even disappear. Software designed for one environment may become less efficient or even break when exposed to a new one, much like an older vehicle needing specialized parts that are no longer manufactured. Keeping pace with these changes requires constant adaptation, and each adaptation can contribute to the internal complexity.
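One way to notice a dependency going stale before it breaks is to treat deprecation warnings as failures in a test run. The following is a minimal sketch using only Python's standard `warnings` module; `old_api` and `uses_deprecated_api` are hypothetical names invented for illustration, standing in for a third-party call and a project-specific check.

```python
import warnings


def old_api():
    # Hypothetical stand-in for a third-party function that its
    # maintainers have marked as deprecated.
    warnings.warn(
        "old_api is deprecated; use new_api",
        DeprecationWarning,
        stacklevel=2,
    )
    return 42


def uses_deprecated_api(func):
    """Return True if calling func() emits a DeprecationWarning."""
    with warnings.catch_warnings():
        # Inside this block, a DeprecationWarning is raised as an
        # exception instead of being printed or silently ignored.
        warnings.simplefilter("error", DeprecationWarning)
        try:
            func()
        except DeprecationWarning:
            return True
    return False


print(uses_deprecated_api(old_api))
print(uses_deprecated_api(lambda: 42))
```

Running a check like this in continuous integration turns a quiet warning into a visible signal, which leaves time to adapt before the deprecated call is removed.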

The impact of digital dust is far-reaching, affecting not just user experience but also the very fabric of innovation. For users, it manifests as frustratingly slow load times, inexplicable crashes, security vulnerabilities, or features that simply stop working as intended. For developers and businesses, it translates into significantly higher maintenance costs. More time is spent deciphering and patching existing, tangled code than on building new capabilities. This drain on resources can stifle development velocity, making it harder to introduce new features or adapt to market demands, ultimately hindering competitive advantage. Large organizations running critical legacy systems, such as banks or government agencies, often face monumental challenges in untangling decades of accumulated digital dust.

Consider the complexity involved in maintaining modern AI models. While the core algorithms might remain stable, the data they’re trained on and the evolving deployment environments can rapidly introduce digital dust. A model might perform excellently on its initial training data but degrade when fed new, slightly different real-world inputs, a problem usually called data drift or model drift. Countering it means regular retraining, data pipeline adjustments, and careful versioning of both models and data. This ongoing effort to keep sophisticated systems relevant and efficient is a testament to the persistent battle against digital decay.
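The kind of input shift described above can be monitored with very simple statistics. The sketch below is one illustrative approach, not a standard method: it measures how far the mean of recent inputs has moved from the training mean, in units of the training standard deviation. The function names, the sample data, and the `0.5` threshold are all assumptions chosen for the example.

```python
from statistics import mean, stdev


def mean_shift_score(reference, current):
    """Distance between the two sample means, measured in
    standard deviations of the reference sample."""
    ref_sd = stdev(reference)
    if ref_sd == 0:
        raise ValueError("reference sample has zero variance")
    return abs(mean(current) - mean(reference)) / ref_sd


def has_drifted(reference, current, threshold=0.5):
    # The threshold is an arbitrary illustrative value; a real system
    # would tune it per feature.
    return mean_shift_score(reference, current) > threshold


# Hypothetical feature values: customer ages at training time vs. today.
training_ages = [30, 32, 34, 36, 38]
recent_ages = [40, 42, 44, 46, 48]

print(has_drifted(training_ages, recent_ages))
print(has_drifted(training_ages, training_ages))
```

A check this crude only catches shifts in the average; production monitoring typically compares whole distributions, but the principle of comparing live inputs against a training-time baseline is the same.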

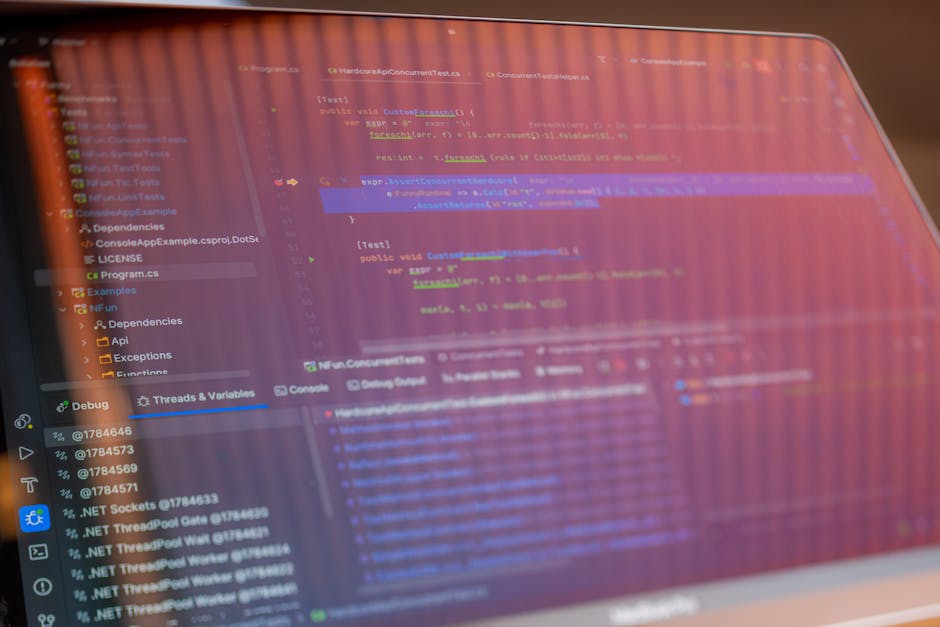

Combating digital dust requires a proactive and sustained effort. It involves practices like regular code refactoring, where developers restructure existing code without changing its external behavior, making it cleaner and easier to change. Rigorous automated testing catches many regressions before they reach users, although no test suite can guarantee that a change is bug-free. Comprehensive documentation helps future teams understand past decisions. Modular design also helps, breaking complex systems into smaller components that can be maintained and updated independently. These are ongoing processes, not one-time fixes.
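To make refactoring and automated testing concrete, here is a small sketch. The shipping-cost rules, the function names, and the constants are all hypothetical. The first function shows the kind of duplicated branching that accumulates over time; the second is a refactored version; the final check confirms that both produce identical results, which is what "without changing its external behavior" means in practice.

```python
def shipping_cost_legacy(weight_kg, express):
    # Dusty version: the same pricing rule is written out four times.
    if express:
        if weight_kg <= 1:
            return 5.0 * 2
        else:
            return (5.0 + (weight_kg - 1) * 2.0) * 2
    else:
        if weight_kg <= 1:
            return 5.0
        else:
            return 5.0 + (weight_kg - 1) * 2.0


BASE_FEE = 5.0          # covers the first kilogram
PER_EXTRA_KG = 2.0      # charged for each kilogram beyond the first
EXPRESS_MULTIPLIER = 2  # express doubles the standard price


def shipping_cost(weight_kg, express):
    # Refactored version: one rule, named constants, no duplication.
    extra_kg = max(weight_kg - 1, 0)
    standard = BASE_FEE + extra_kg * PER_EXTRA_KG
    return standard * EXPRESS_MULTIPLIER if express else standard


def behaviour_matches():
    """Check that old and new versions agree on a set of sample inputs."""
    cases = [(w, e) for w in (0.5, 1, 2, 10) for e in (False, True)]
    return all(
        shipping_cost_legacy(w, e) == shipping_cost(w, e) for w, e in cases
    )


print(behaviour_matches())
```

Keeping a comparison like `behaviour_matches` in the test suite during the transition lets the old function be deleted with some confidence once the new one has replaced it everywhere.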

Ultimately, understanding digital dust reshapes our perception of technology. It reminds us that software, despite its immaterial nature, is not immune to a form of decay. It demands continuous care, much like a garden needs tending to prevent overgrowth and disorder. The battle against digital entropy is an ongoing endeavor, a testament to the dynamic, living nature of the digital world we inhabit, and a critical component in ensuring the resilience and longevity of our interconnected future.